Two sample t-test

Also called the independent samples t-test or the hypothesis test for the difference of two means; these names all refer to the same test.

Theory

Assumptions

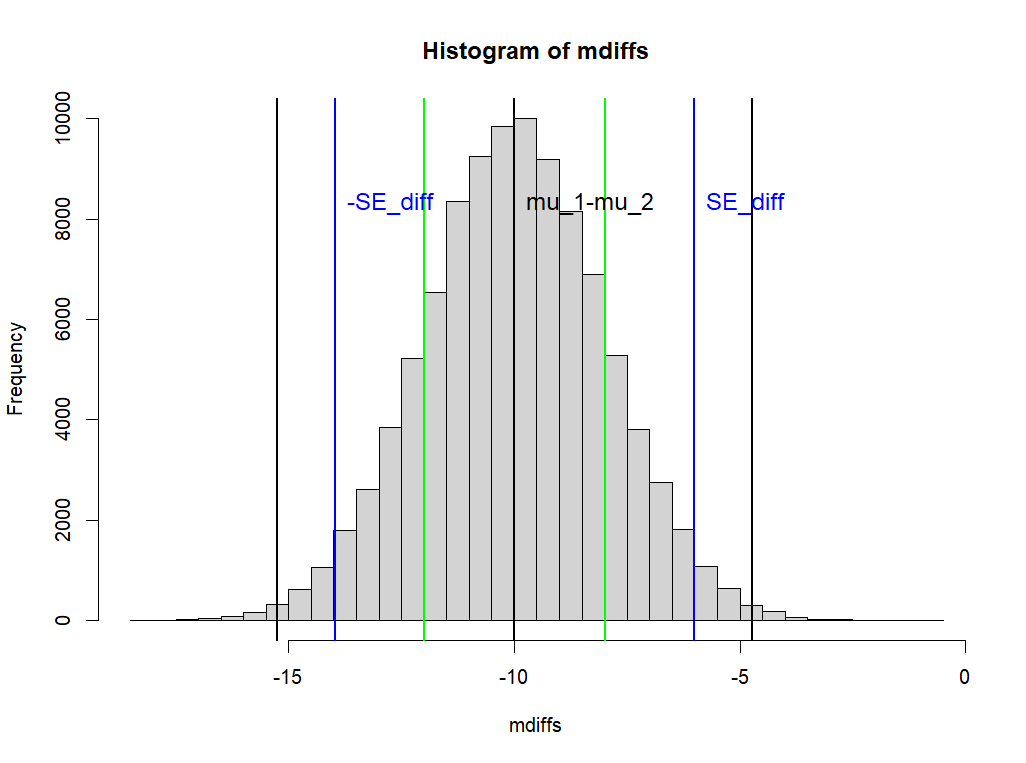

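- There are two populations, p1 and p2.

- Draw a sample from each population and compute its mean.

- Record the difference between the two sample means.

- Repeat this indefinitely.

- What are the mean and variance of the resulting collection of sample mean differences?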

From the central limit theorem page, the expected value and variance properties section of the statistical review page, and the mean and variance of the sample mean page, we already know the following.

\begin{eqnarray*}

\overline{X} & \sim & \left( \mu, \;\; \frac{\sigma^2}{n} \right) \\

& & \text{in other words, } \\

E \left[ \overline{X} \right] & = & \mu \\

Var \left[ \overline{X} \right] & = & \frac{\sigma^2}{n} \\

& & \text{Assuming that } X_{1} \text{ and } X_{2} \text{ are independent, } \\

\overline{X_{1}} & \sim & \left( \mu_{1}, \frac{\sigma^2_{1}}{n_{1}} \right) \\

\overline{X_{2}} & \sim & \left( \mu_{2}, \frac{\sigma^2_{2}}{n_{2}} \right) \\

& & \text{note that } n_{1}, n_{2} \text{ are the sample sizes,} \\

& & \text{and } \\

& & \frac{\sigma^2_{1}}{n_{1}} = Var \left[ \overline{X_{1}} \right] \\

\end{eqnarray*}

The collection of differences between the two sample means (the distribution of the sample mean difference) therefore has the following properties.

\begin{eqnarray*}

E \left[ \overline{X_{1}} - \overline{X_{2}} \right] & = & \mu_{1} - \mu_{2} \;, \;\;\; \text{and} \\

Var \left[ \overline{X_{1}} - \overline{X_{2}} \right] & = &

Var \left[ \overline{X_{1}} \right] + Var \left[ \overline{X_{2}} \right] \\

& = & \frac{\sigma^2_{1}}{n_{1}} + \frac{\sigma^2_{2}}{n_{2}} \\

\text{SE}_{\overline{X_{1}} - \overline{X_{2}}} & = & \text{SE}_{\text{diff}} \\

& = & \sqrt { \frac{\sigma^2_{1}}{n_{1}} + \frac{\sigma^2_{2}}{n_{2}} } \\

\\

& & \text{If the variance of each population is unknown,} \\

& & \text{we use the sample variances instead of } \sigma^2 \text{.} \\

& & \text{If the degrees of freedom of the two groups differ,} \\

& & \text{we weight by them to obtain the pooled variance, } \; \text{s}^{2}_{\text{p}} \text{:} \\

\text{s}^{2}_{\text{p}} & = & \dfrac {\text{SS}_{1} + \text{SS}_{2}} {\text{df}_{1} + \text{df}_{2} } \\

& & \text{Hence, } \\

\text{SE}_{\text{diff}} & = & \sqrt {\frac{\text{s}^{2}_{\text{p}}}{n_1} + \frac{\text{s}^{2}_{\text{p}}}{n_2} } \\

\end{eqnarray*}
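As a quick numerical check of these formulas, the values used in the simulation on this page can be plugged in: $\mu_{1} = 100$, $\mu_{2} = 110$, $\sigma_{1} = \sigma_{2} = 10$, and $n_{1} = n_{2} = 50$. This is only a worked example under the assumption that the population variances are known; the simulated mean and standard deviation of the differences should land close to these values.

\begin{eqnarray*}
E \left[ \overline{X_{1}} - \overline{X_{2}} \right] & = & 100 - 110 = -10 \\
Var \left[ \overline{X_{1}} - \overline{X_{2}} \right] & = & \frac{10^2}{50} + \frac{10^2}{50} = 2 + 2 = 4 \\
\text{SE}_{\text{diff}} & = & \sqrt{4} = 2 \\
\end{eqnarray*}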

rm(list=ls())

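# Draw n values whose mean and sd are exactly `mean` and `sd`.
# scale() returns a one-column matrix, so convert it back to a plain vector.

rnorm2 <- function(n,mean,sd){

  as.numeric(mean+sd*scale(rnorm(n)))

}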

ss <- function(x) {

sum((x-mean(x))^2)

}

N.p <- 1000000

m.p <- 100

sd.p <- 10

set.seed(101)

p1 <- rnorm2(N.p, m.p, sd.p)

mean(p1)

sd(p1)

p2 <- rnorm2(N.p, m.p+10, sd.p)

mean(p2)

sd(p2)

sz1 <- sz2 <- 50

df1 <- sz1 - 1

df2 <- sz2 - 1

df.tot <- df1 + df2

iter <- 100000

mdiffs <- rep(NA, iter)

means.s1 <- rep(NA, iter)

means.s2 <- rep(NA, iter)

tail(mdiffs)

for (i in 1:iter) {

# draw one sample from each population (with replacement) and record the means

s1 <- sample(p1, sz1, replace = T)

s2 <- sample(p2, sz2, replace = T)

means.s1[i] <- mean(s1)

means.s2[i] <- mean(s2)

mdiffs[i] <- mean(s1)-mean(s2)

}

# Calculation based on the theorems and proofs above

mu <- mean(p1) - mean(p2)

# var(x1bar-x2bar) = var(x1bar) + var(x2bar)

# var(x1bar) = var(x1)/n, n = sample size

ms <- var(p1)/sz1 + var(p2)/sz2

se <- sqrt(ms)

mu

ms

se

# Distribution obtained by simulation

# mdiffs

m.diff <- mean(mdiffs)

var.diff <- var(mdiffs)

sd.diff <- sd(mdiffs)

m.diff

var.diff

sd.diff

var(means.s1)

var(p1)/sz1

var(means.s2)

var(p2)/sz2

var(means.s1-means.s2)

var(means.s1) + var(means.s2) - 2 * cov(means.s1, means.s2)

# When the two samples are fully independent, cov = 0, so

var(p1)/sz1 + var(p2)/sz2

var.diff <- (var(p1)/sz1) + (var(p2)/sz2)

var.diff

sqrt(var.diff)

se.diff <- sqrt(var.diff)

se.diff

# Plotting this as a histogram

hist(mdiffs, breaks=50)

abline(v=mean(mdiffs),

col="black", lwd=2)

se.diff

one <- qt(1-(.32/2), df=df.tot)

two <- qt(1-(.05/2), df=df.tot)

thr <- qt(1-(.01/2), df=df.tot)

ci68 <- se.diff*one

ci95 <- se.diff*two

ci99 <- se.diff*thr

abline(v=c(m.diff-ci68, m.diff-ci95, m.diff-ci99,

m.diff+ci68, m.diff+ci95, m.diff+ci99),

col=c("green", "blue", "black"), lwd=2)

text(x=m.diff, y=iter/12,

labels=paste("mu_1-mu_2"),

col="black", pos = 4, cex=1.2)

# label the lower and upper 95% bounds (m.diff -/+ t * SE_diff)

text(x=m.diff-ci95, y=iter/12,

labels=paste("-t*SE_diff (95%)"),

col="blue", pos = 4, cex=1.2)

text(x=m.diff+ci95, y=iter/12,

labels=paste("+t*SE_diff (95%)"),

col="blue", pos = 4, cex=1.2)
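The simulation above relies on the known population variances. When only one pair of samples is available, the pooled-variance formula from the Theory section applies instead. The following is a minimal sketch of that calculation, assuming equal population variances; the two samples are simulated purely for illustration, and the names `x1`, `x2`, `var.p`, `se.pooled`, `t.calc`, and `t.ref` are introduced here and are not part of the original code.

```r
# Illustrative data only: two simulated samples of different sizes
set.seed(1)
x1 <- rnorm(50, mean = 100, sd = 10)
x2 <- rnorm(40, mean = 110, sd = 10)

# sum of squares, same definition as ss() earlier on this page
ss <- function(x) {
  sum((x - mean(x))^2)
}

n1 <- length(x1)
n2 <- length(x2)
df1 <- n1 - 1
df2 <- n2 - 1

# pooled variance: s^2_p = (SS1 + SS2) / (df1 + df2)
var.p <- (ss(x1) + ss(x2)) / (df1 + df2)

# standard error of the difference: sqrt(s^2_p/n1 + s^2_p/n2)
se.pooled <- sqrt(var.p / n1 + var.p / n2)

# t statistic for H0: mu1 - mu2 = 0
t.calc <- (mean(x1) - mean(x2)) / se.pooled

# cross-check against R's built-in equal-variance t-test
t.ref <- t.test(x1, x2, var.equal = TRUE)
c(manual = t.calc, builtin = unname(t.ref$statistic))
```

The two values printed on the last line should agree, since `t.test()` with `var.equal = TRUE` uses the same pooled standard error with `df1 + df2` degrees of freedom.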