c:itamc:2018

Table of Contents

Intro. to the media content, advanced

Introduction

Introduction to social network analysis

Data file: textmining.zip

Create a TextMining directory in your R working directory.

Unzip the zip file into that directory.
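
The two setup steps can also be done from R. This is a minimal sketch, assuming textmining.zip has already been saved in the current working directory. The helper name prepare_corpus_dir is made up for illustration, and whether the archive unpacks into a subfolder of its own is not stated here, so check the returned file list.

```r
# Hypothetical helper: create the target directory and unpack the archive into it.
prepare_corpus_dir <- function(zip_path, target_dir) {
  if (!dir.exists(target_dir)) {
    dir.create(target_dir, recursive = TRUE)
  }
  if (file.exists(zip_path)) {
    unzip(zip_path, exdir = target_dir)
  } else {
    message("Archive not found: ", zip_path)
  }
  # Return the files now present so the result can be checked by eye.
  list.files(target_dir)
}

# Assumed locations; adjust to wherever the zip file was saved.
corpus_files <- prepare_corpus_dir("textmining.zip",
                                   file.path(getwd(), "TextMining"))
print(corpus_files)
```

The directory passed as target_dir is the one to give to DirSource() when building the corpus.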

Text mining example 1

NeededPackages <- c("tm", "SnowballC", "RColorBrewer", "ggplot2", "wordcloud", "biclust",

"cluster", "igraph", "fpc")

install.packages(NeededPackages, dependencies = TRUE)

Sys.setlocale(category = "LC_ALL", locale = "US")

library(tm)

#Create Corpus

docs <- Corpus(DirSource("D:/Users/Hyo/Documents/TextMining"))

docs

#inspect a particular document

writeLines(as.character(docs[[30]]))

getTransformations()

#create the toSpace content transformer

toSpace <- content_transformer(function(x, pattern) {return (gsub(pattern, " ", x))})

docs <- tm_map(docs, toSpace, "-")

docs <- tm_map(docs, toSpace, ":")

#Remove punctuation - replace punctuation marks with " "

docs <- tm_map(docs, removePunctuation)

docs <- tm_map(docs, toSpace, "’")

docs <- tm_map(docs, toSpace, "‘")

docs <- tm_map(docs, toSpace, " -")
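
Why replace hyphens and colons with spaces before removing punctuation? A small base R sketch shows the difference. It assumes that removing punctuation amounts to deleting the characters outright, which is how removePunctuation appears to behave by default. The sample string is made up.

```r
x <- "people-related issues: team-based work"

# Deleting punctuation outright glues the neighbouring words together.
deleted <- gsub("[[:punct:]]+", "", x)

# Replacing the same characters with a space first keeps the words apart.
spaced <- gsub(":", " ", gsub("-", " ", x))

print(deleted)
print(spaced)
```

The extra spaces left behind by the second approach are harmless, because whitespace is stripped in a later step.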

#Transform to lower case (need to wrap in content_transformer)

docs <- tm_map(docs, content_transformer(tolower))

#Strip digits (std transformation, so no need for content_transformer)

docs <- tm_map(docs, removeNumbers)

#remove stopwords using the standard list in tm

docs <- tm_map(docs, removeWords, stopwords("english"))

#Strip whitespace (cosmetic?)

docs <- tm_map(docs, stripWhitespace)

writeLines(as.character(docs[[30]]))
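
The stopword and whitespace steps can be imitated on a single string in base R. This is only a sketch of the idea: the four-word stop list is made up (the list returned by stopwords("english") is much longer), and the regular expression is an assumption about how whole-word removal can be done, not a copy of tm's internals.

```r
# Tiny made-up stop list for illustration.
stop_list <- c("the", "of", "is", "a")
x <- "the holy grail of effective collaboration is a shared understanding"

# Remove whole words only (\\b marks a word boundary).
pattern <- paste0("\\b(", paste(stop_list, collapse = "|"), ")\\b")
no_stop <- gsub(pattern, "", x, perl = TRUE)

# Removal leaves runs of spaces behind; collapse them and trim the ends.
cleaned <- trimws(gsub("\\s+", " ", no_stop, perl = TRUE))
print(cleaned)
```

This is why whitespace is stripped after the stopwords are removed: each deleted word leaves a gap.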

#load library

library(SnowballC)

#Stem document

docs <- tm_map(docs,stemDocument)

writeLines(as.character(docs[[30]]))

docs <- tm_map(docs, content_transformer(gsub), pattern = "organiz", replacement = "organ")

docs <- tm_map(docs, content_transformer(gsub), pattern = "organis", replacement = "organ")

docs <- tm_map(docs, content_transformer(gsub), pattern = "andgovern", replacement = "govern")

docs <- tm_map(docs, content_transformer(gsub), pattern = "inenterpris", replacement = "enterpris")

docs <- tm_map(docs, content_transformer(gsub), pattern = "team-", replacement = "team")
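
The gsub fix-ups merge spelling variants that the stemmer leaves as separate stems. A minimal base R sketch with four made-up tokens:

```r
stems <- c("organiz", "organis", "organ", "reorganiz")

# Apply the two replacements in the same order as the script.
merged <- gsub("organiz", "organ", stems)
merged <- gsub("organis", "organ", merged)

print(merged)
```

Note that gsub matches anywhere inside a token, so a longer token containing the pattern (the made-up "reorganiz" here) is changed as well.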

dtm <- DocumentTermMatrix(docs)

dtm

inspect(dtm[1:2,1000:1005])

inspect(dtm)
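
A document-term matrix has one row per document, one column per term, and term counts in the cells. The sketch below builds one by hand in base R for two made-up, already stemmed documents. It illustrates the data structure only and is not how tm builds its sparse matrix.

```r
toy_docs <- c(doc1 = "project manag project",
              doc2 = "system design system manag")

# Split each document into tokens and collect the vocabulary.
tokens <- strsplit(toy_docs, " ", fixed = TRUE)
terms <- sort(unique(unlist(tokens)))

# Count every vocabulary term in every document (terms x documents).
counts <- vapply(tokens,
                 function(tok) as.integer(table(factor(tok, levels = terms))),
                 integer(length(terms)))
rownames(counts) <- terms

# Transpose so that documents are rows and terms are columns.
toy_dtm <- t(counts)
print(toy_dtm)
```

Most cells in a real matrix are zero because most terms occur in only a few documents, which is why tm reports sparsity.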

freq <- colSums(as.matrix(dtm))

#length should be total number of terms

length(freq)

#create sort order (descending)

ord <- order(freq, decreasing=TRUE)

#inspect most frequently occurring terms

freq[head(ord)]

#inspect least frequently occurring terms

freq[tail(ord)]
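
The same colSums / order pattern on a made-up 2 x 3 count matrix, as a sketch of what each step returns:

```r
toy_counts <- matrix(c(2, 0, 1,
                       1, 4, 0),
                     nrow = 2, byrow = TRUE,
                     dimnames = list(c("doc1", "doc2"),
                                     c("project", "system", "manag")))

# Total occurrences of each term across all documents.
toy_freq <- colSums(toy_counts)

# order() returns column positions, largest total first.
toy_ord <- order(toy_freq, decreasing = TRUE)

most <- toy_freq[head(toy_ord, 2)]   # two most frequent terms
least <- toy_freq[tail(toy_ord, 1)]  # least frequent term
print(most)
print(least)
```

Indexing the frequency vector with the order positions keeps the term names attached to the counts.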

# word length 4 or more

dtmr <-DocumentTermMatrix(docs, control=list(wordLengths=c(4, 20), bounds = list(global = c(3,27))))

dtmr

inspect(dtmr)
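
The control list does two things: wordLengths=c(4, 20) keeps terms of 4 to 20 characters, and bounds = list(global = c(3,27)) keeps terms that occur in at least 3 and at most 27 documents. The base R sketch below applies the same kind of filter to a made-up 4-document matrix, with the document bounds scaled down to 2 and 3. It shows the logic only, not tm's implementation.

```r
m <- matrix(c(1, 1, 1, 0,
              2, 0, 1, 0,
              1, 0, 3, 5,
              1, 0, 0, 0),
            nrow = 4, byrow = TRUE,
            dimnames = list(paste0("doc", 1:4),
                            c("one", "rare", "system", "unionist")))

# Length filter: keep terms with 4 to 20 characters.
keep_len <- nchar(colnames(m)) >= 4 & nchar(colnames(m)) <= 20

# Document-frequency filter: number of documents containing each term.
doc_freq <- colSums(m > 0)
keep_df <- doc_freq >= 2 & doc_freq <= 3   # scaled-down bounds for 4 documents

filtered <- m[, keep_len & keep_df, drop = FALSE]
print(colnames(filtered))
```

Here "one" is dropped for being too short and too common, while "rare" and "unionist" are dropped because each occurs in a single document.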

freqr <- colSums(as.matrix(dtmr))

#length should be total number of terms

length(freqr)

#create sort order (descending)

ordr <- order(freqr,decreasing=TRUE)

#inspect most frequently occurring terms

freqr[head(ordr)]

#inspect least frequently occurring terms

freqr[tail(ordr)]

findFreqTerms(dtmr,lowfreq=80)

findAssocs(dtmr, "project", 0.6)

findAssocs(dtmr, "enterpris", 0.6)

findAssocs(dtmr, "system", 0.6)
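
The third argument of findAssocs is a lower limit on correlation: a term is reported when its per-document counts correlate with those of the target term at or above that value. The sketch below reproduces the idea in base R with cor() on a made-up matrix. That this matches findAssocs' internal calculation exactly is an assumption.

```r
m <- matrix(c(5, 4, 0,
              3, 3, 1,
              0, 1, 4,
              1, 0, 5),
            nrow = 4, byrow = TRUE,
            dimnames = list(paste0("doc", 1:4),
                            c("project", "manag", "data")))

target <- "project"
others <- setdiff(colnames(m), target)

# Pearson correlation of the target column with every other term column.
assoc <- cor(m[, target], m[, others])[1, ]

# Keep only terms at or above the limit, strongest first.
assoc <- sort(round(assoc[assoc >= 0.6], 2), decreasing = TRUE)
print(assoc)
```

A high value means the two terms tend to be frequent in the same documents; it says nothing about whether they appear next to each other in the text.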

wf=data.frame(term=names(freqr),occurrences=freqr)

library(ggplot2)

p <- ggplot(subset(wf, freqr>100), aes(term, occurrences))

p <- p + geom_bar(stat="identity")

p <- p + theme(axis.text.x=element_text(angle=45, hjust=1))

p

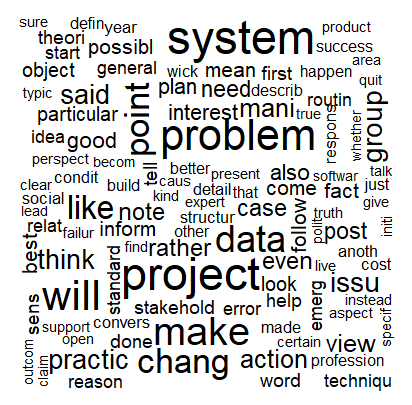
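
By default the bars are drawn in alphabetical order of term. One possible variation, sketched here in base R without plotting, is to filter on the data frame's own column and turn term into a factor ordered by frequency. The six counts are the top terms of freqr printed in the output; the threshold of 200 and the names top_freq, wf_top and wf_big are made up for this example.

```r
# Top terms and counts as printed by freqr[head(ordr)] in the output.
top_freq <- c(organ = 276, manag = 230, work = 210,
              system = 193, project = 188, problem = 174)

wf_top <- data.frame(term = names(top_freq), occurrences = top_freq,
                     stringsAsFactors = FALSE)

# Filter on the column itself rather than on a separate vector.
wf_big <- subset(wf_top, occurrences > 200)

# Order the factor levels by frequency so a bar chart follows that order.
wf_big$term <- factor(wf_big$term,
                      levels = wf_big$term[order(wf_big$occurrences,
                                                 decreasing = TRUE)])
print(levels(wf_big$term))
```

Passing a data frame prepared this way to ggplot would draw the bars from most to least frequent.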

#wordcloud

library(wordcloud)

#setting the same seed each time ensures consistent look across clouds

set.seed(42)

#limit words by specifying min frequency

wordcloud(names(freqr),freqr, min.freq=30)

#…add color

wordcloud(names(freqr),freqr,min.freq=30,colors=brewer.pal(6,"Dark2"))

output

> library(tm)

Loading required package: NLP

Attaching package: ‘NLP’

The following object is masked from ‘package:ggplot2’:

annotate

Warning messages:

1: package ‘tm’ was built under R version 3.4.4

2: package ‘NLP’ was built under R version 3.4.4

> #Create Corpus

> docs <- Corpus(DirSource("D:/Users/Hyo/Documents/TextMining"))

> docs

<<SimpleCorpus>>

Metadata: corpus specific: 1, document level (indexed): 0

Content: documents: 31

> #inspect a particular document

> writeLines(as.character(docs[[30]]))

TOGAF or not TOGAF but is that the question?

"The Holy Grail of effective collaboration is creating shared understanding, which is a precursor to shared commitment." <96> Jeff Conklin.

"Without context, words and actions have no meaning at all." <96> Gregory Bateson.

I spent much of last week attending a class on the TOGAF Enterprise Architecture (EA) framework. Prior experience with IT frameworks such as PMBOK and ITIL had taught me that much depends on the instructor <96> a good one can make the material come alive whereas a not-so-good one can make it an experience akin to watching grass grow. I neednt have worried: the instructor was superb, and my classmates, all of whom are experienced IT professionals / architects, livened up the proceedings through comments and discussions both in class and outside it. All in all, it was a thoroughly enjoyable and educative experience, something I cannot say for many of the professional courses I have attended.

One of the things about that struck me about TOGAF is the way in which the components of the framework hang together to make a coherent whole (see the introductory chapter of the framework for an overview). To be sure, there is a lot of detail within those components, but there is a certain abstract elegance <96> dare I say, beauty <96> to the framework.

That said TOGAF is (almost) entirely silent on the following question which I addressed in a post late last year:

Why is Enterprise Architecture so hard to get right?

Many answers have been offered. Here are some, extracted from articles published by IT vendors and consultancies:

Lack of sponsorship

Not engaging the business

Inadequate communication

Insensitivity to culture / policing mentality

Clinging to a particular tool or framework

Building an ivory tower

Wrong choice of architect

(Note: the above points are taken from this article and this one)

It is interesting that the first four issues listed are related to the fact that different stakeholders in an organization have vastly different perspectives on what an enterprise architecture initiative should achieve. This lack of shared understanding is what makes enterprise architecture a socially complex problem rather than a technically difficult one. As Jeff Conklin points out in this article, problems that are technically complex will usually have a solution that will be acceptable to all stakeholders, whereas socially complex problems will not. Sending a spacecraft to Mars is an example of the former whereas an organization-wide ERP (or EA!) project or (on a global scale) climate change are instances of the latter.

Interestingly, even the fifth and sixth points in the list above <96> framework dogma and retreating to an ivory tower <96> are usually consequences of the inability to manage social complexity. Indeed, that is precisely the point made in the final item in the list: enterprise architects are usually selected for their technical skills rather than their ability to deal with ambiguities that are characteristic of social complexity.

TOGAF offers enterprise architects a wealth of tools to manage technical complexity. These need to be complemented by a suite of techniques to reconcile worldviews of different stakeholder groups. Some examples of such techniques are Soft Systems Methodology, Polarity Management, and Dialogue Mapping. I wont go into details of these here, but if youre interested, please have a look at my posts entitled, The Approach <96> a dialogue mapping story and The dilemmas of enterprise IT for brief introductions to the latter two techniques via IT-based examples.

<Advertisement > Better yet, you could check out Chapter 9 of my book for a crash course on Soft Systems Methodology and Polarity Management and Dialogue Mapping, and the chapters thereafter for a deep dive into Dialogue Mapping </Advertisement>.

Apart from social complexity, there is the problem of context <96> the circumstances that shape the unique culture and features of an organization. As I mentioned in my introductory remarks, the framework is abstract <96> it applies to an ideal organization in which things can be done by the book. But such an organization does not exist! Aside from unique people-related and political issues, all organisations have their own quirks and unique features that distinguish them from other organisations, even within the same domain. Despite superficial resemblances, no two pharmaceutical companies are alike. Indeed, the differences are the whole point because they are what make a particular organization what it is. To paraphrase the words of the anthropologist, Gregory Bateson, the differences are what make a difference.

Some may argue that the framework acknowledges this and encourages, even exhorts, people to tailor the framework to their needs. Sure, the word "tailor" and its variants appear almost 700 times in the version 9.1 of the standard but, once again, there is no advice offered on how this tailoring should be done. And one can well understand why: it is impossible to offer any sensible advice if one doesnt know the specifics of the organization, which includes its context.

On a related note, the TOGAF framework acknowledges that there is a hierarchy of architectures ranging from the general (foundation) to the specific (organization). However despite the acknowledgement of diversity, in practice TOGAF tends to focus on similarities between organisations. Most of the prescribed building blocks and processes are based on assumed commonalities between the structures and processes in different organisations. My point is that, although similarities are important, architects need to focus on differences. These could be differences between the organization they are working in and the TOGAF ideal, or even between their current organization and others that they have worked with in the past (and this is where experience comes in really handy). Cataloguing and understanding these unique features <96> the differences that make a difference <96> draws attention to precisely those issues that can cause heartburn and sleepless nights later.

I have often heard arguments along the lines of "80% of what we do follows a standard process, so it should be easy for us to standardize on a framework." These are famous last words, because some of the 20% that is different is what makes your organization unique, and is therefore worthy of attention. You might as well accept this upfront so that you get a realistic picture of the challenges early in the game.

To sum up, frameworks like TOGAF are abstractions based on an ideal organization; they gloss over social complexity and the unique context of individual organisations. So, questions such as the one posed in the title of this post are akin to the pseudo-choice between Coke and Pepsi, for the real issue is something else altogether. As Tom Graves tells us in his wonderful blog and book, the enterprise is a story rather than a structure, and its architecture an ongoing sociotechnical drama.

> getTransformations()

[1] "removeNumbers" "removePunctuation" "removeWords"

[4] "stemDocument" "stripWhitespace"

> #create the toSpace content transformer

> toSpace <- content_transformer(function(x, pattern) {return (gsub(pattern, " ", x))})

> docs <- tm_map(docs, toSpace, "-")

> docs <- tm_map(docs, toSpace, ":")

> #Remove punctuation - replace punctuation marks with " "

> docs <- tm_map(docs, removePunctuation)

>

> docs <- tm_map(docs, toSpace, "’")

> docs <- tm_map(docs, toSpace, "‘")

> docs <- tm_map(docs, toSpace, " -")

> #Transform to lower case (need to wrap in content_transformer)

> docs <- tm_map(docs,content_transformer(tolower))

> #Strip digits (std transformation, so no need for content_transformer)

> docs <- tm_map(docs, removeNumbers)

> #remove stopwords using the standard list in tm

> docs <- tm_map(docs, removeWords, stopwords("english"))

> #Strip whitespace (cosmetic?)

> docs <- tm_map(docs, stripWhitespace)

> writeLines(as.character(docs[[30]]))

togaf togaf question holy grail effective collaboration creating shared understanding precursor shared commitment jeff conklin without context words actions meaning gregory bateson spent much last week attending class togaf enterprise architecture ea framework prior experience frameworks pmbok itil taught much depends instructor good one can make material come alive whereas good one can make experience akin watching grass grow neednt worried instructor superb classmates experienced professionals architects livened proceedings comments discussions class outside thoroughly enjoyable educative experience something say many professional courses attended one things struck togaf way components framework hang together make coherent whole see introductory chapter framework overview sure lot detail within components certain abstract elegance dare say beauty framework said togaf almost entirely silent following question addressed post late last year enterprise architecture hard get right many an... <truncated>

> #load library

> library(SnowballC)

Warning message:

package ‘SnowballC’ was built under R version 3.4.4

>

> #Stem document

> docs <- tm_map(docs,stemDocument)

> writeLines(as.character(docs[[30]]))

togaf togaf question holi grail effect collabor creat share understand precursor share commit jeff conklin without context word action mean gregori bateson spent much last week attend class togaf enterpris architectur ea framework prior experi framework pmbok itil taught much depend instructor good one can make materi come aliv wherea good one can make experi akin watch grass grow neednt worri instructor superb classmat experienc profession architect liven proceed comment discuss class outsid thorough enjoy educ experi someth say mani profession cours attend one thing struck togaf way compon framework hang togeth make coher whole see introductori chapter framework overview sure lot detail within compon certain abstract eleg dare say beauti framework said togaf almost entir silent follow question address post late last year enterpris architectur hard get right mani answer offer extract articl publish vendor consult lack sponsorship engag busi inadequ communic insensit cultur polic menta... <truncated>

> docs <- tm_map(docs, content_transformer(gsub), pattern = "organiz", replacement = "organ")

> docs <- tm_map(docs, content_transformer(gsub), pattern = "organis", replacement = "organ")

> docs <- tm_map(docs, content_transformer(gsub), pattern = "andgovern", replacement = "govern")

> docs <- tm_map(docs, content_transformer(gsub), pattern = "inenterpris", replacement = "enterpris")

> docs <- tm_map(docs, content_transformer(gsub), pattern = "team-", replacement = "team")

> dtm <- DocumentTermMatrix(docs)

> dtm

<<DocumentTermMatrix (documents: 31, terms: 3892)>>

Non-/sparse entries: 13977/106675

Sparsity : 88%

Maximal term length: 54

Weighting : term frequency (tf)

> inspect(dtm[1:2,1000:1005])

<<DocumentTermMatrix (documents: 2, terms: 6)>>

Non-/sparse entries: 0/12

Sparsity : 100%

Maximal term length: 12

Weighting : term frequency (tf)

Sample :

                                      Terms
Docs                                   decid decis defineeffici degre demand devoid
BeyondEntitiesAndRelationships.txt         0     0            0     0      0      0
bigdata.txt                                0     0            0     0      0      0

> inspect(dtm)

<<DocumentTermMatrix (documents: 31, terms: 3892)>>

Non-/sparse entries: 13977/106675

Sparsity : 88%

Maximal term length: 54

Weighting : term frequency (tf)

Sample :

                                      Terms
Docs                                       can   manag     one   organ problem project  system     use     way    work
BeyondEntitiesAndRelationships.txt          26       8      15       8       5       1       6      18       9       0
ConditionsOverCauses.txt                     8       9       7      14       5       2       4       1       3      13
EmergentDesignInEnterpriseIT.txt            15       6      28       8      16      17      13      14      11       4
FromInformationToKnowledge.txt              35       7      17       9      16       4      21      25       8      10
MakingSenseOfOrganizationalChange.txt       15      10      26      27      15       7       6      10      27      25
MakingSenseOfSensemaking.txt                25       7      26      12      12      19       9      27      22      13
RoutinesAndReality.txt                       8       3      13      10       6       4      36      16       9      13
SixHeresiesForBI.txt                         6       3      10       7       4       0       4       9       2       5
TheEssenceOfEntrepreneurship.txt             5       2      24       2       5       1       0       6      30      10
ThreeTypesOfUncertainty.txt                 13       9      18       3      15       1       0       3       4       7

> freq <- colSums(as.matrix(dtm))

> #length should be total number of terms

> length(freq)

[1] 3892

> #create sort order (descending)

> ord <- order(freq, decreasing=TRUE)

> #inspect most frequently occurring terms

> freq[head(ord)]

one organ can manag work system

325 276 244 230 210 193

>

> #inspect least frequently occurring terms

> freq[tail(ord)]

therebi timeorgan uncommit unionist willing workday

1 1 1 1 1 1

> # word length 4 or more

> dtmr <-DocumentTermMatrix(docs, control=list(wordLengths=c(4, 20), bounds = list(global = c(3,27))))

> dtmr

<<DocumentTermMatrix (documents: 31, terms: 1295)>>

Non-/sparse entries: 10082/30063

Sparsity : 75%

Maximal term length: 15

Weighting : term frequency (tf)
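
The summary figures can be checked by hand: documents x terms gives the number of cells, the non-sparse and sparse counts must add up to it, and sparsity is the sparse share. A small sketch using only the numbers printed in the two summaries above. The helper name sparsity_pct is made up, and rounding to a whole percent is an assumption that agrees with the printed values.

```r
# Figures copied from the two printed summaries (dtm and dtmr).
full    <- list(docs = 31, terms = 3892, non_sparse = 13977, sparse = 106675)
reduced <- list(docs = 31, terms = 1295, non_sparse = 10082, sparse = 30063)

# Hypothetical helper: share of zero cells, as a rounded percentage.
sparsity_pct <- function(s) {
  cells <- s$docs * s$terms
  stopifnot(s$non_sparse + s$sparse == cells)  # the two counts cover every cell
  round(100 * s$sparse / cells)
}

print(sparsity_pct(full))     # 88
print(sparsity_pct(reduced))  # 75
```

Restricting word length and document frequency removes about two thirds of the terms (3892 down to 1295) and lowers sparsity from 88% to 75%.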

> inspect(dtmr)

<<DocumentTermMatrix (documents: 31, terms: 1295)>>

Non-/sparse entries: 10082/30063

Sparsity : 75%

Maximal term length: 15

Weighting : term frequency (tf)

Sample :

                                      Terms
Docs                                     differ   exampl    manag    organ  problem  project question   system     will     work
BeyondEntitiesAndRelationships.txt           14        6        8        8        5        1       19        6       12        0
ConditionsOverCauses.txt                      2        5        9       14        5        2        0        4        5       13
EmergentDesignInEnterpriseIT.txt             11        8        6        8       16       17        8       13       11        4
FromInformationToKnowledge.txt                7       21        7        9       16        4       16       21        5       10
MakingSenseOfOrganizationalChange.txt         4       10       10       27       15        7       10        6       12       25
MakingSenseOfSensemaking.txt                 17       15        7       12       12       19       63        9       18       13
RoutinesAndReality.txt                        7        2        3       10        6        4        2       36        8       13
SixHeresiesForBI.txt                          2        7        3        7        4        0        4        4        6        5
TheEssenceOfEntrepreneurship.txt             15       15        2        2        5        1        0        0       10       10
ThreeTypesOfUncertainty.txt                   6       15        9        3       15        1        2        0        6        7

> freqr <- colSums(as.matrix(dtmr))

> #length should be total number of terms

> length(freqr)

[1] 1295

>

> #create sort order (descending)

> ordr <- order(freqr,decreasing=TRUE)

>

> #inspect most frequently occurring terms

> freqr[head(ordr)]

organ manag work system project problem

276 230 210 193 188 174

>

> #inspect least frequently occurring terms

> freqr[tail(ordr)]

hmmm struck multin lower pseudo gloss

3 3 3 3 3 3

> findFreqTerms(dtmr,lowfreq=80)

[1] "action" "approach" "base" "busi"

[5] "data" "design" "develop" "differ"

[9] "discuss" "enterpris" "exampl" "group"

[13] "howev" "import" "issu" "make"

[17] "manag" "mani" "model" "often"

[21] "organ" "peopl" "point" "practic"

[25] "problem" "process" "project" "question"

[29] "said" "situat" "system" "thing"

[33] "think" "time" "understand" "view"

[37] "well" "will" "work" "chang"

[41] "consult" "decis" "even" "like"

> findAssocs(dtmr, "project", 0.6)

$project

inher manag occurr handl experienc

0.80 0.68 0.66 0.66 0.60

> findAssocs(dtmr, "enterpris", 0.6)

$enterpris

agil increment realist upfront technolog

0.81 0.79 0.77 0.76 0.69

solv neither movement adapt architect

0.68 0.68 0.66 0.66 0.65

architectur chanc fine featur

0.65 0.64 0.64 0.62

> findAssocs(dtmr, "system", 0.6)

$system

design subset adopt user involv specifi function

0.78 0.78 0.77 0.75 0.72 0.71 0.70

intend step softwar specif intent compos depart

0.68 0.68 0.68 0.67 0.66 0.66 0.65

phone frequent author wherea pattern cognit

0.64 0.63 0.61 0.61 0.61 0.60

> wf=data.frame(term=names(freqr),occurrences=freqr)

> library(ggplot2)

> p <- ggplot(subset(wf, freqr>100), aes(term, occurrences))

> p <- p + geom_bar(stat="identity")

> p <- p + theme(axis.text.x=element_text(angle=45, hjust=1))

> p

> #wordcloud

> library(wordcloud)

Loading required package: RColorBrewer

Warning messages:

1: package ‘wordcloud’ was built under R version 3.4.4

2: package ‘RColorBrewer’ was built under R version 3.4.4

> #setting the same seed each time ensures consistent look across clouds

> set.seed(42)

> #limit words by specifying min frequency

> wordcloud(names(freqr),freqr, min.freq=30)

There were 50 or more warnings (use warnings() to see the first 50)

> #…add color

> wordcloud(names(freqr),freqr,min.freq=30,colors=brewer.pal(6,"Dark2"))

There were 50 or more warnings (use warnings() to see the first 50)

>
